Part 1 of MythBusters busted the myth of unstable tests. Part 2 here smashes another myth: “UI tests are slow.” Far be it from me to dispute that UI tests are slower than unit or integration tests, but let me show you the best practices that speed up UI tests.

Let’s first consider the steps you’d take for a typical, simple test:

- Log in to the system.

- Prepare the data for the test.

- Validate that the data appears as expected.

Executed the slow way with Selenium, those three steps look like this:

- To log in, you must navigate to the home page, click the login icon, and type in your credentials.

- To prepare the data for the test, you must create the data in the UI.

- To validate the data, you must take a few steps.

Depending on the speed of the network and database usage, such a typical test at Cloudinary takes 20-30 seconds.

SET UP TEST DATA VIA API AND NOT UI

However, that same test in our test suite takes only 10 seconds, a reduction of at least 50 percent. That might seem insignificant, but across thousands of tests those seconds add up to many expensive minutes. Here are the steps we take to accelerate the process:

- For logins, we use an authentication API so that we are automatically logged in to the system after navigating to the home page.

- For data preparation, we also use an API. For example, in our automation framework, we upload media assets with SDK calls.

- For validation, we follow the same steps as those in the “long” test.
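
The first two steps above could be sketched as follows. This is a minimal illustration, not Cloudinary's actual framework: `fetch_session_cookie` and `upload_asset` are hypothetical callables standing in for an authentication API and an SDK upload call, the cookie contents are placeholders, and `driver` is assumed to be a Selenium WebDriver (only its `get`, `add_cookie`, and `refresh` methods are used).

```python
from typing import Callable, Dict, Iterable, List


def login_via_api(driver, base_url: str,
                  fetch_session_cookie: Callable[[], Dict[str, str]]) -> None:
    """Log in without touching the login form.

    `fetch_session_cookie` is a hypothetical helper that calls an
    authentication API and returns a cookie dict such as
    {"name": "session", "value": "<token>"}.
    """
    # A browser only accepts cookies for the domain it is currently on,
    # so open the site first.
    driver.get(base_url)
    driver.add_cookie(fetch_session_cookie())
    # Reload so the page is rendered for the authenticated session.
    driver.refresh()


def prepare_assets(upload_asset: Callable[[str], str],
                   paths: Iterable[str]) -> List[str]:
    """Create test data through an API or SDK call instead of the UI.

    `upload_asset` is a hypothetical wrapper around an SDK upload call
    that returns the identifier of the created asset.
    """
    return [upload_asset(path) for path in paths]
```

Passing the API calls in as parameters keeps the sketch independent of any particular HTTP client or SDK. Only the validation step still goes through the browser.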

As to the parallelism of test execution, many frameworks offer parallel execution out of the box. To prepare for that, we do the following:

- Write independent tests. Our tests must be independent. Dependent tests might run in parallel, but they would fail due to the dependencies. For example, in parallel execution, Test 2 might run before Test 1; if Test 2 depends on Test 1, Test 2 will fail.

- Use parallel test runners. We must prepare solutions for continuous integration (CI) by leveraging free tools like Selenium Grid and Selenoid, or paid solutions. We can also run tests in parallel with the framework without any third-party software.

- Aim for cost-effectiveness. Because parallel execution requires more CPU and memory, we must boost the performance of the executing machines, i.e., find the sweet spot between lab size and test execution time. At Cloudinary, we use five AWS instances and execute 10 parallel test runners.

- Optimize the test size. Another important factor that affects time performance is the test size. Tests should be short and should check only what is required. In manual testing, we can check many details along the way, e.g., the login process, links, colors, fonts, etc. However, in an automated test, we need to check only one thing.

Also, remember that not every manual test needs to be automated. In case of bugs, if your automation framework is built correctly, you will see only the failures that relate to those bugs, which is a big help for debugging.
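
To illustrate the points about independence and parallel runners, here is a minimal sketch of independent tests executed by a pool of workers. It uses Python's standard `concurrent.futures` rather than Selenium Grid or Selenoid, and the test functions and the `unique_name` helper are hypothetical stand-ins for real UI tests. Each test creates its own uniquely named data and checks one thing, so the order in which the pool picks up the tests does not matter.

```python
import uuid
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Sequence


def unique_name(prefix: str) -> str:
    """Return a name no other test will use, so tests never share data."""
    return f"{prefix}-{uuid.uuid4().hex}"


def test_upload_is_listed() -> None:
    asset = unique_name("asset")   # data owned by this test only
    library = {asset}              # stand-in for "upload via API"
    assert asset in library        # the single thing this test checks


def test_delete_is_not_listed() -> None:
    asset = unique_name("asset")
    library = {asset}
    library.discard(asset)         # stand-in for "delete via API"
    assert asset not in library


def run_in_parallel(tests: Sequence[Callable[[], None]],
                    workers: int = 10) -> Dict[str, bool]:
    """Run each test in a worker thread and report pass/fail by name."""
    def run(test: Callable[[], None]) -> bool:
        try:
            test()
            return True
        except AssertionError:
            return False

    with ThreadPoolExecutor(max_workers=workers) as pool:
        outcomes = list(pool.map(run, tests))
    return {test.__name__: ok for test, ok in zip(tests, outcomes)}
```

Calling `run_in_parallel([test_delete_is_not_listed, test_upload_is_listed])` gives the same results whichever order the tests are listed in. The default of 10 workers only mirrors the runner count mentioned above; the right number depends on the CPU and memory of the executing machines.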

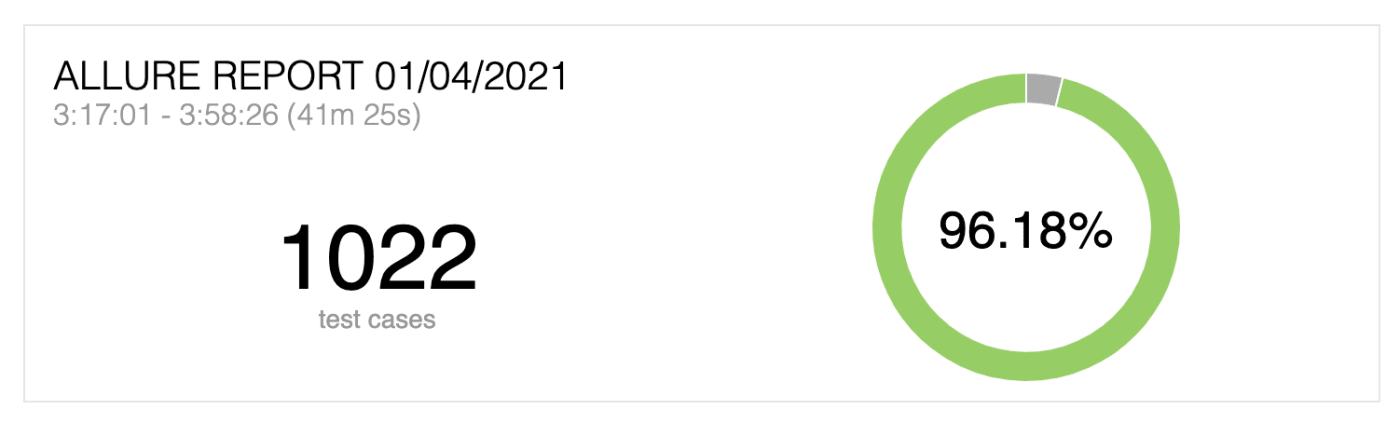

At Cloudinary, we follow all the rules described above for over 1,000 automated tests with a total execution time of about 40 minutes. We don’t stop there, however, and will continue to look for ways to save even more time in automated tests.
