Adaptive bitrate streaming

Last updated: Mar-10-2026

Adaptive bitrate streaming is a video delivery technique that adjusts the quality of a video stream in real time according to detected bandwidth and CPU capacity. This lets videos start more quickly, with fewer buffering interruptions, and at the best possible quality for the current device and network connection, maximizing the user experience.

Cloudinary can automatically generate and deliver HLS or MPEG-DASH adaptive bitrate streaming videos at the required quality levels, including generating all of the required files from a single original video asset.

Adaptive bitrate streaming in Next.js video tutorial

Watch this video to learn how to add adaptive bitrate streaming to a Next.js application using the CldVideoPlayer component from Next Cloudinary.


How adaptive bitrate streaming works

When adaptive bitrate streaming is applied, the client can start by playing the first few seconds of the video at a low quality so that playback begins quickly. Once the player's video buffer is full, and assuming CPU utilization is low, the client may switch to a higher-quality stream to enhance the viewing experience. If the buffer later drops below certain levels, or CPU utilization rises above certain thresholds, the client may switch to a lower-quality stream.

At the start of the streaming session, the client software downloads a master playlist file containing the metadata for the various sub-streams that are available. The client software then decides what to download from the available media files, based on predefined factors such as device type, resolution, current bandwidth, size, etc.
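To illustrate the client-side decision, here is a minimal sketch of one common heuristic. It picks the highest-bandwidth variant that fits within a safety margin of the measured throughput. This is not how any particular player (including the Cloudinary Video Player) is implemented. The `Variant` model and the 0.8 safety factor are assumptions, and the sketch ignores CPU load and buffer level.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Variant:
    """One representation advertised in a master playlist (simplified model)."""
    bandwidth_bps: int  # bits per second needed to stream this variant
    resolution: str     # e.g. "1280x720"


def choose_variant(variants: List[Variant], measured_bps: float,
                   safety_factor: float = 0.8) -> Variant:
    """Return the highest-bandwidth variant that fits inside a safety margin
    of the measured throughput, or the lowest variant if none fits.
    The safety factor is an assumed, illustrative value."""
    if not variants:
        raise ValueError("the master playlist lists no variants")
    ordered = sorted(variants, key=lambda v: v.bandwidth_bps)
    budget = measured_bps * safety_factor
    affordable = [v for v in ordered if v.bandwidth_bps <= budget]
    return affordable[-1] if affordable else ordered[0]


# Video + audio bitrates of representations 2, 4 and 5 of the predefined
# h264 profiles (see Possible representations further down this page).
LADDER = [
    Variant(334_000, "480x270"),
    Variant(1_596_000, "960x540"),
    Variant(3_428_000, "1280x720"),
]

if __name__ == "__main__":
    for throughput in (300_000, 2_500_000, 5_000_000):
        print(throughput, choose_variant(LADDER, throughput).resolution)
```

With 2.5 Mbps measured, the budget is 2 Mbps, so the 960x540 variant is chosen. When even the lowest variant exceeds the budget, the player still has to play something, so the sketch falls back to the lowest variant.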

To deliver videos using adaptive streaming, you need multiple copies of your video prepared at different resolutions, qualities, and data rates. These are called video representations (also sometimes known as variants). You also need index and streaming files for each representation. The master file references the available representations, provides information required for the client to choose between them, and includes other required metadata, depending on the delivery protocol.

Cloudinary can automatically generate and deliver all of these files from a single original video, transcoded to either or both of the following protocols:

- HTTP Live Streaming (HLS)

- Dynamic Adaptive Streaming over HTTP (MPEG-DASH)

See also: HTTP Live Streaming feature overview.

Delivering adaptive bitrate streaming videos

To deliver videos from Cloudinary using HLS or MPEG-DASH, you can either let Cloudinary automatically choose the best streaming profile, or manually select your own.

Automatic streaming profile selection

The easiest way to deliver videos from Cloudinary using HLS or DASH adaptive bitrate streaming is to let Cloudinary choose the best streaming profile on the fly. This includes support for 4K output when you set the maximum resolution.

Set the streaming_profile parameter to auto (sp_auto in URLs), and specify an extension of either .m3u8 for HLS or .mpd for DASH. Automatic streaming profile selection uses the CMAF format, and your video is processed for streaming in the requested protocol on first request.

Here's an example using HLS:
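As a sketch of how such a URL is put together, the helper below assembles an sp_auto delivery URL by hand. It follows the standard `https://res.cloudinary.com/<cloud_name>/video/upload/<transformation>/<public_id>.<ext>` shape. The cloud name `demo` and public ID `my_video` are hypothetical placeholders.

```python
BASE_URL = "https://res.cloudinary.com"

# The file extension selects the protocol when using sp_auto
EXTENSIONS = {"hls": "m3u8", "dash": "mpd"}


def sp_auto_url(cloud_name: str, public_id: str, protocol: str = "hls") -> str:
    """Build a delivery URL that lets Cloudinary pick the streaming profile."""
    try:
        ext = EXTENSIONS[protocol]
    except KeyError:
        raise ValueError(f"unsupported protocol: {protocol!r}") from None
    return f"{BASE_URL}/{cloud_name}/video/upload/sp_auto/{public_id}.{ext}"


if __name__ == "__main__":
    print(sp_auto_url("demo", "my_video"))          # HLS manifest
    print(sp_auto_url("demo", "my_video", "dash"))  # DASH manifest
```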

- Depending on your browser, you may not be able to play the streamed video without the use of a video player that supports adaptive bitrate streaming. The video above is displayed using the Cloudinary video player.

- When requesting a DASH stream, you will receive a 423 response until the video has been processed.

- Currently, only a limited set of video transformations is supported together with sp_auto. See examples of transformations that you can apply in Combining transformations with automatic streaming profile selection.

See full syntax: sp_auto in the Transformation Reference.

Setting the maximum resolution

To optimize bandwidth usage and give you better expense predictability when using sp_auto, you can limit the resolution of the streamed video.

The default maximum resolution is 1080p, but you can also choose from these other options: 2160p, 1440p, 720p, 540p, and 360p.

For example, setting the maximum resolution to 360p (sp_auto:maxres_360p):

Or, for 4K delivery (sp_auto:maxres_2160p):
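The maxres qualifier is appended to sp_auto with a colon. The hypothetical helper below validates the value against the options listed above and builds the HLS URL. The cloud name `demo` and public ID `my_video` are placeholders.

```python
from typing import Optional

# Maximum resolutions accepted by sp_auto, as listed above (1080p is the default)
ALLOWED_MAXRES = ("2160p", "1440p", "1080p", "720p", "540p", "360p")


def sp_auto_component(maxres: Optional[str] = None) -> str:
    """Return the sp_auto transformation component, optionally capped."""
    if maxres is None:
        return "sp_auto"  # the default 1080p cap applies
    if maxres not in ALLOWED_MAXRES:
        raise ValueError(f"maxres must be one of {ALLOWED_MAXRES}, got {maxres!r}")
    return f"sp_auto:maxres_{maxres}"


def capped_hls_url(cloud_name: str, public_id: str,
                   maxres: Optional[str] = None) -> str:
    """HLS delivery URL with an optional maximum resolution."""
    component = sp_auto_component(maxres)
    return f"https://res.cloudinary.com/{cloud_name}/video/upload/{component}/{public_id}.m3u8"


if __name__ == "__main__":
    print(capped_hls_url("demo", "my_video", "360p"))
    print(capped_hls_url("demo", "my_video", "2160p"))
```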

- The possible values for maxres refer to the shorter edge of the video. For example, 720p is 720 pixels by 1280 pixels, regardless of the orientation of the video (portrait or landscape).
- When setting maxres to 2160p for 4K output or 1440p for 2K, the processing of all renditions is asynchronous and you receive a 423 response on the initial request.
- The input resolution also limits the final delivered resolution: no upscaling occurs when the input is lower than the value of maxres.
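The notes above combine into a simple rule: the shorter edge is capped at maxres, but never raised above the input. Below is a rough sketch that estimates the top rendition size under that rule. It assumes the aspect ratio is preserved and uses plain rounding. Actual renditions may differ, for example by rounding to even dimensions.

```python
from typing import Tuple


def top_rendition_size(width: int, height: int,
                       maxres: str = "1080p") -> Tuple[int, int]:
    """Estimate the largest rendition delivered for a given input size.

    Assumption: both edges are scaled by the same factor (aspect ratio kept).
    """
    cap = int(maxres.rstrip("p"))   # "720p" -> 720; applies to the shorter edge
    short = min(width, height)
    target_short = min(short, cap)  # no upscaling beyond the input
    return (round(width * target_short / short),
            round(height * target_short / short))


if __name__ == "__main__":
    print(top_rendition_size(1920, 1080, "720p"))  # landscape input
    print(top_rendition_size(1080, 1920, "720p"))  # portrait input
    print(top_rendition_size(640, 360, "1080p"))   # input below the cap
```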

Defining alternate audio tracks

When using automatic streaming profiles, you can take advantage of defining alternate audio tracks that will be added to your generated manifest file. This allows you to enhance accessibility, support multiple languages, or offer unique audio experiences such as descriptive soundtracks. When delivered using a compatible video player (such as the Cloudinary Video Player), users will be able to select their preferred audio track from the UI.

To define additional audio tracks, use the audio layer transformation (l_audio) with the alternate flag (fl_alternate). This flag takes two additional parameters used to describe the language of the audio track and the name that's displayed in the UI:

- lang: the IETF language tag and optional regional tag. For example, lang_en-US.
- name: an optional name for the audio track that will be shown in the player UI. For example, name_Description. If no name is provided, the name will be inferred from the language.

Here's an example showing a video that now has an alternate instrumental audio track as well as the original vocal track:

The transformation is broken down as follows:

- fl_alternate:lang_en;name_Original,l_audio:outdoors: adds the original audio from the video using the same public ID (outdoors). The alternate flag is added with the language set to en and the name set to Original.
- fl_alternate:lang_en;name_Instrumental,l_audio:docs:instrumental-short: adds another audio layer, again setting the language to en but this time with the name Instrumental.
- sp_auto: applies automatic streaming profile selection.
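The SDKs don't support fl_alternate, so one option is to build each audio-layer component as a string. The sketch below reproduces the two components from the breakdown. It writes folder separators in layer public IDs as colons, as in `docs:instrumental-short`. Omitting `;name_...` when no name is given is an assumption based on name being optional. The sketch builds only the individual components. The complete example URL isn't reproduced on this page and may contain additional components.

```python
from typing import Optional


def layer_public_id(public_id: str) -> str:
    """Layer public IDs write folder separators as colons."""
    return public_id.replace("/", ":")


def alternate_audio_component(public_id: str, lang: str,
                              name: Optional[str] = None) -> str:
    """Build one fl_alternate audio-layer component.

    Assumption: when no name is given, the ';name_...' part is simply omitted.
    """
    flag = f"fl_alternate:lang_{lang}"
    if name:
        flag += f";name_{name}"
    return f"{flag},l_audio:{layer_public_id(public_id)}"


if __name__ == "__main__":
    print(alternate_audio_component("outdoors", "en", "Original"))
    print(alternate_audio_component("docs/instrumental-short", "en", "Instrumental"))
```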

And when delivered using the Cloudinary Video Player, the audio selection menu is visible:

- The fl_alternate flag is not currently supported by our SDKs.
- All audio tracks must be added as a layer, including the original track; otherwise, the first audio layer will be used as the default.
- Audio transformations can be applied to an audio layer as normal, for example to adjust the volume of a particular track (e_volume).

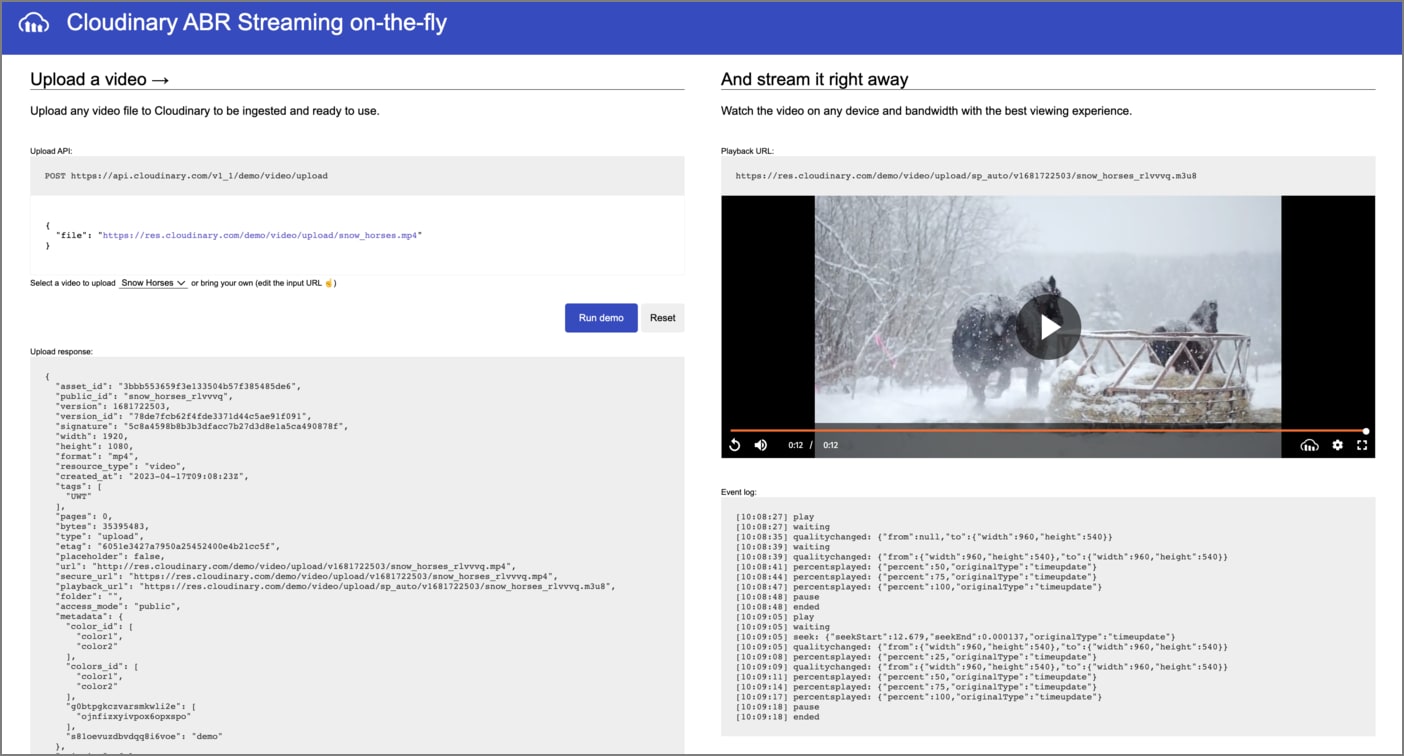

Interactive demo: automatic streaming profile selection

See various videos being streamed using automatic streaming profile selection in this interactive demo.

Combining transformations with automatic streaming profile selection

When combining automatic streaming profile selection (sp_auto) with other transformations, you cannot include those transformations in the same component as sp_auto, so they need to be chained.

Here are some examples of sp_auto with chained transformations:

Trimming

The following example shows a 21-second video trimmed down to 7 seconds, starting from second 3 (so_3.0) and finishing at second 10 (eo_10.0):

Adding image overlays

The following example shows the Cloudinary logo overlaid on the video (l_cloudinary_icon_blue) in the top-left corner (fl_layer_apply,g_north_west):
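Because these transformations can't share a component with sp_auto, each goes into its own path segment before it. The sketch below chains the trimming and overlay components shown above. It assumes that placing sp_auto as the last component before the public ID is acceptable. The cloud name `demo` and public ID `my_video` are placeholders.

```python
def sp_auto_chain(cloud_name: str, public_id: str, *components: str,
                  ext: str = "m3u8") -> str:
    """Chain the given components before sp_auto, one component per segment.

    Assumption: sp_auto is placed last, directly before the public ID.
    """
    path = "/".join([*components, "sp_auto"])
    return f"https://res.cloudinary.com/{cloud_name}/video/upload/{path}/{public_id}.{ext}"


# Trimming: start offset 3.0 s, end offset 10.0 s -> 10.0 - 3.0 = 7 s of video
trimmed = sp_auto_chain("demo", "my_video", "so_3.0,eo_10.0")

# Image overlay: the layer and its placement are separate chained components
with_logo = sp_auto_chain("demo", "my_video",
                          "l_cloudinary_icon_blue", "fl_layer_apply,g_north_west")

if __name__ == "__main__":
    print(trimmed)
    print(with_logo)
```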

Adding subtitles

You can add subtitles to videos as explained in Adding subtitles to HLS videos. For example, the text specified in the file with public ID docs/narration.vtt is used for US English subtitles (sp_auto:subtitles_((code_en-US;file_docs:narration.vtt))) with this video:

Normalizing audio

You can normalize the audio in videos with the e_volume:auto parameter. This ensures consistent volume levels across different sections of the same video. In the following example of mixed videos with different volume levels, the audio is normalized to reduce the difference in volume between the loud sections and the quiet sections:

- e_volume:auto is specific to videos delivered with sp_auto.
- Currently, this is the only volume option that you can use with sp_auto.

Manual streaming profile selection

To deliver videos with Cloudinary using HLS or MPEG-DASH adaptive bitrate streaming:

- Select a predefined streaming profile

- Upload your video with an eager transformation

- Deliver the video

Step 1. Select a streaming profile

Cloudinary provides a collection of predefined streaming profiles, where each profile defines a set of representations according to suggested best practices. Select a predefined profile based on your performance and codec requirements.

For example, the 4k profile creates 8 different representations in 16:9 aspect ratio, from extremely high quality to low quality, while the sd profile creates only 3 representations, all in 4:3 aspect ratio. Other commonly used profiles include the hd and full_hd profiles. You can also select a profile that uses a more advanced codec such as h265 or vp9. These profiles create the same representations, but with slightly different settings.

Below is the list of predefined streaming profiles for the h264, h265, vp9 and av1 codecs:

| Codec | Profiles | Supported for |
|---|---|---|
| h264 | 4k, full_hd, full_hd_wifi, full_hd_lean, hd, hd_lean, sd | HLS, DASH |
| h265 | 4k_h265, full_hd_h265, full_hd_wifi_h265, full_hd_lean_h265, hd_h265, hd_lean_h265, sd_h265 | HLS, DASH |
| vp9 | 4k_vp9, full_hd_vp9, full_hd_wifi_vp9, full_hd_lean_vp9, hd_vp9, hd_lean_vp9, sd_vp9 | DASH |
| av1 | 4k_av1, full_hd_av1, full_hd_wifi_av1, full_hd_lean_av1, hd_av1, hd_lean_av1, sd_av1 | DASH |

To view a detailed list of settings for each representation, see Predefined streaming profiles.

If none of the predefined profiles exactly answers your needs, you can also optionally define custom streaming profiles or even fine-tune the predefined options. You might want a different number of representations, different divisions of quality, different codecs or a different aspect ratio. Or, you might want to apply special transformations for different representations within the profile.

For example, if you want to make use of the more advanced video encoding provided by the vp9 and h265 codecs, or the audio encoding provided by the opus codec, you can create your own streaming profiles (or update the predefined ones). You can use the h265 codec with both HLS and MPEG-DASH; however, the vp9 and opus codecs can only be used with MPEG-DASH. You can combine codecs when creating your streaming profiles to ensure the widest support across different browsers and devices.

Use the streaming_profiles method of the Admin API to create, update, list, delete, or get details of streaming profiles.

Step 2. Upload your video with an eager transformation

A single streaming profile consists of many derived files, so it can take a while for Cloudinary to generate them all. Therefore, when you upload your video (or later, explicitly), you should include eager, asynchronous transformations with the required streaming profile and video format.

You can even eagerly prepare your videos for streaming in both formats and you can include other video transformations as well. However, make sure the streaming_profile is provided as a separate component of chained transformations.

For example, this upload command encodes the handshake.mp4 video to HLS format using the full_hd streaming profile:

The eager transformation response includes the delivery URLs for each requested encoding.
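The upload command itself is not reproduced here, but the sketch below shows the option values it would carry. The keys mirror Upload API parameter names (resource_type, public_id, eager, eager_async). No SDK call is made. The `sp_<profile>/<format>` string form of the eager value, with the format as its own component, is an assumption to check against the SDK or REST documentation you use.

```python
from typing import Any, Dict

ADAPTIVE_FORMATS = ("m3u8", "mpd")  # HLS and MPEG-DASH manifests


def streaming_eager(profile: str, fmt: str) -> str:
    """Eager transformation: streaming profile, then the format as its own
    component (assumed string form)."""
    if fmt not in ADAPTIVE_FORMATS:
        raise ValueError(f"format must be one of {ADAPTIVE_FORMATS}, got {fmt!r}")
    return f"sp_{profile}/{fmt}"


def upload_options(public_id: str, profile: str, fmt: str) -> Dict[str, Any]:
    """Options for an upload request; keys mirror Upload API parameter names."""
    return {
        "resource_type": "video",
        "public_id": public_id,
        "eager": streaming_eager(profile, fmt),
        "eager_async": True,  # derive the representations in the background
    }


if __name__ == "__main__":
    # handshake.mp4 encoded to HLS with the full_hd profile, as described above
    print(upload_options("handshake", "full_hd", "m3u8"))
```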

See more adaptive streaming upload examples.

Derived adaptive streaming files

When the encoding process is complete for all representations, the derived files will include:

HLS

- A fragmented video streaming file (.ts) for each representation (see note 1)

- An index file (.m3u8) of references to the fragments for each representation

- A single master playlist file (.m3u8) containing references to the representation files above and other required metadata

DASH

- A fragmented video streaming file (.mp4dv) for each representation

- An audio stream file (.mp4da) for each representation

- An index file (.mpd) containing references to the fragments of each streaming file as well as a master playlist containing references to the representation files above and other required metadata

1 If working with HLS v.3, a separate video streaming file (.ts) is derived for each fragment of each representation. To work with HLS v.3, you need a private CDN configuration and you need to add a special hlsv3 flag to your video transformations.

Step 3. Deliver the video

After the eager transformation is complete, deliver your video using the .m3u8 (HLS) or .mpd (MPEG-DASH) file format (extension) and include the streaming_profile (sp_<profilename>) and other transformations exactly matching those you provided in your eager transformation (as per the URL that was returned in the upload response).

For example, the delivery code for the video that was uploaded in Step 2 above is:
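Since the delivery URL must exactly match the eager transformation, one way to avoid drift is to derive the URL from the same eager string. A minimal sketch follows. It assumes the last slash-separated part of the eager string is the format and reuses everything before it unchanged. The cloud name `demo` is a placeholder.

```python
def delivery_url(cloud_name: str, public_id: str, eager_transformation: str) -> str:
    """Turn an eager transformation such as 'sp_full_hd/m3u8' into a delivery URL.

    Assumption: the last slash-separated part is the format (file extension);
    the remaining components are reused verbatim so they match the eager
    transformation exactly.
    """
    *components, fmt = eager_transformation.split("/")
    if not components:
        raise ValueError("expected at least one transformation before the format")
    path = "/".join(components)
    return f"https://res.cloudinary.com/{cloud_name}/video/upload/{path}/{public_id}.{fmt}"


if __name__ == "__main__":
    print(delivery_url("demo", "handshake", "sp_full_hd/m3u8"))
```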

See full syntax: sp_<profile name> in the Transformation Reference.

To ensure that your encoded video can stream adaptively in all environments, you can embed the Cloudinary Video Player in your application. The Cloudinary Video Player supports video delivery in both HLS and MPEG-DASH.

Delivering HLS version 3

By default, HLS transcoding uses HLS v4. If you need to deliver HLS v3, add the hlsv3 flag parameter (fl_hlsv3 in URLs) when setting the video file format (extension) to .m3u8. Cloudinary then automatically creates the fragmented video files (all with a .ts extension), each with a duration of 10 seconds, as well as the required .m3u8 index files for each of the fragmented files.
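Because each HLS v3 fragment lasts 10 seconds, the number of .ts files grows with both the video duration and the number of representations. The back-of-the-envelope sketch below assumes the final fragment simply holds whatever remains (so the count rounds up). It also assumes every representation is fragmented identically.

```python
import math

FRAGMENT_SECONDS = 10  # HLS v3 fragment duration stated above


def hls_v3_fragment_count(duration_seconds: float) -> int:
    """Fragments per representation; assumes the last one may be shorter."""
    if duration_seconds <= 0:
        raise ValueError("duration must be positive")
    return math.ceil(duration_seconds / FRAGMENT_SECONDS)


def hls_v3_ts_files(duration_seconds: float, representations: int) -> int:
    """Total .ts files, assuming every representation is fragmented alike."""
    return hls_v3_fragment_count(duration_seconds) * representations


if __name__ == "__main__":
    # A 21-second video with a 5-representation profile such as hd
    print(hls_v3_ts_files(21, 5))
```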

Examples

Step 2 above includes a simple example for uploading a video and using a streaming profile to prepare the required files for delivering the video in adaptive streaming format. This section provides some additional examples.

Request several encodings and formats at once
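As a sketch of requesting several encodings at once, the helper below joins (profile, format) pairs into a single eager value. Separating transformations with `|` and appending the format as its own component are assumptions about the string form of the eager parameter. The check that vp9 and av1 profiles are only requested as .mpd follows the codec table above.

```python
from typing import Iterable, Tuple

DASH_ONLY_SUFFIXES = ("_vp9", "_av1")  # DASH-only profiles, per the codec table


def eager_for_encodings(requests: Iterable[Tuple[str, str]]) -> str:
    """Join several (profile, format) requests into one eager value.

    Assumption: transformations are separated by '|' in the string form.
    """
    parts = []
    for profile, fmt in requests:
        if fmt not in ("m3u8", "mpd"):
            raise ValueError(f"unsupported format {fmt!r}")
        if fmt == "m3u8" and profile.endswith(DASH_ONLY_SUFFIXES):
            raise ValueError(f"{profile} is only supported for DASH (.mpd)")
        parts.append(f"sp_{profile}/{fmt}")
    return "|".join(parts)


if __name__ == "__main__":
    print(eager_for_encodings([("full_hd", "m3u8"), ("full_hd", "mpd"),
                               ("hd_vp9", "mpd")]))
```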

Use chained transformations with a streaming profile

Encode an already uploaded video using Explicit

Eager response example

Notes and guidelines

For small videos, where all of the derived files combined are less than 60 MB, you can deliver the transformation URL on the fly (instead of as an eager transformation), but this should be used only for demo or debugging purposes. In a production environment, we recommend always eagerly transforming your video representations.

The adaptive streaming files for small videos (where all of the derived files combined are less than 60 MB) are derived synchronously if you do not set the async parameter. Larger videos are prepared asynchronously. Notification (webhook) of completion is sent to the URL specified in the eager_notification_url parameter.

If you set the format to an adaptive manifest file (m3u8 or mpd) using the format parameter, you must either set the file extension to match or omit the extension completely. If you provide a different file extension, your video will not play correctly.

By default, video sampling is per 2 seconds. You can define a custom sampling rate by specifying it as a chained transformation prior to the streaming profile. For example (in Ruby):

All the generated files are considered derived files of the original video. For example, when performing invalidate on a video, the corresponding derived adaptive streaming files are also invalidated.

If you select a predefined profile and it includes any representations with a larger resolution than the original, those representations will not be created. However, at minimum, one representation will be created.
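The rule above can be sketched as a filter over a profile's representations. The sketch uses the 16:9 sizes of representations 1 to 6 (the full_hd profile) from the tables below. It assumes "larger than the original" means exceeding the original's width or height. When nothing fits, it keeps the smallest representation so that at least one is created.

```python
from typing import Dict, List, Tuple

# 16:9 representations 1-6 (the full_hd profile), from Possible representations
FULL_HD_LADDER: Dict[int, Tuple[int, int]] = {
    1: (320, 180), 2: (480, 270), 3: (640, 360),
    4: (960, 540), 5: (1280, 720), 6: (1920, 1080),
}


def representations_to_create(ladder: Dict[int, Tuple[int, int]],
                              orig_w: int, orig_h: int) -> List[int]:
    """IDs of representations not larger than the original video.

    Assumption: a representation is 'larger' if either edge exceeds the
    original's; if none fit, the smallest one is still created.
    """
    fitting = [rid for rid, (w, h) in sorted(ladder.items())
               if w <= orig_w and h <= orig_h]
    if fitting:
        return fitting
    smallest = min(ladder, key=lambda rid: ladder[rid][0] * ladder[rid][1])
    return [smallest]


if __name__ == "__main__":
    print(representations_to_create(FULL_HD_LADDER, 1280, 720))
    print(representations_to_create(FULL_HD_LADDER, 200, 112))
```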

If the aspect ratio of the original video does not match the aspect ratio of the selected streaming profile, then the c_limit cropping transformation is applied to make the video fit the required size. If you don't want to use this cropping method, use a base transformation to crop the video to the relevant aspect ratio.

If you prepare streaming for a lower-level profile, and then in the future want to prepare the same video with a higher-level profile, the new high-quality representations will be created, while any already existing representations that match will be reused.
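For intuition about c_limit, the sketch below approximates its documented behavior. A video larger than the target box is scaled down, keeping its aspect ratio, until it fits. A video that already fits is left unchanged, since c_limit never upscales. Rounding to the nearest pixel is an assumption.

```python
from typing import Tuple


def limit_fit(orig_w: int, orig_h: int, box_w: int, box_h: int) -> Tuple[int, int]:
    """Approximate c_limit: shrink to fit the box, keep aspect ratio, never enlarge.

    Assumption: dimensions are rounded to the nearest pixel.
    """
    if orig_w <= box_w and orig_h <= box_h:
        return orig_w, orig_h  # already fits; no upscaling
    scale = min(box_w / orig_w, box_h / orig_h)
    return round(orig_w * scale), round(orig_h * scale)


if __name__ == "__main__":
    # A 4:3 original mapped onto a 16:9 representation size
    print(limit_fit(1440, 1080, 1280, 720))
```

For example, with a 4:3 original and a 16:9 representation of 1280x720, the result is 960x720: the height limit governs and the width ends up narrower than the box. This is why cropping with a base transformation may be preferable.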

Predefined streaming profiles

Each of the predefined streaming profiles will generate a number of different representations. The following sections summarize the representations that are generated for each predefined streaming profile and the settings for each representation.

Representations generated for each profile

The table below lists the available streaming profiles, the aspect ratio, and which of the representations are generated for each. The IDs in the table map to the IDs defined in the section below.

| Profile Name | Aspect Ratio | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|
| 4k | 16:9 | + | + | + | + | + | + | + | + |
| full_hd | 16:9 | + | + | + | + | + | + | | |
| full_hd_wifi | 16:9 | + | + | + | | | | | |
| full_hd_lean | 16:9 | + | + | + | | | | | |
| hd_lean | 16:9 | + | + | + | | | | | |
| hd | 16:9 | + | + | + | + | + | | | |
| sd | 4:3 | + | + | + | | | | | |

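The same representations are generated for the corresponding profiles of the other codecs, for example 4k_h265, 4k_vp9 or 4k_av1.

Possible representations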

| ID | Aspect Ratio | Resolution | Audio | H264 Bitrate (kbps) | H265 Bitrate (kbps) | VP9 Bitrate (kbps) | AV1 Bitrate (kbps) |
|---|---|---|---|---|---|---|---|
| 8 | 16:9 | 3840x2160 | AAC stereo 128kbps | 18000 | 12000 (30fps), 15000 (60fps) | 12000 (30fps), 15000 (60fps) | 7200 (30fps), 11000 (60fps) |
| 7 | 16:9 | 2560x1440 | AAC stereo 128kbps | 12000 | 6000 (30fps), 9000 (60fps) | 6000 (30fps), 9000 (60fps) | 4500 (30fps), 6600 (60fps) |
| 6 | 16:9 | 1920x1080 | AAC stereo 128kbps | 5500 | 3800 | 3800 | 3060 |
| 5 | 16:9 | 1280x720 | AAC stereo 128kbps | 3300 | 2300 | 2300 | 1930 |
| 4 | 16:9 | 960x540 | AAC stereo 96kbps | 1500 | 1100 | 1100 | 790 |
| 3 | 16:9 | 640x360 | AAC stereo 96kbps | 500 | 350 | 350 | 320 |
| 3(SD) | 4:3 | 640x480 | AAC stereo 128kbps | 800 | 550 | 550 | 490 |
| 2 | 16:9 | 480x270 | AAC stereo 64kbps | 270 | 200 | 200 | 190 |
| 2(SD) | 4:3 | 480x360 | AAC stereo 64kbps | 400 | 300 | 300 | 260 |
| 1 | 16:9 | 320x180 | AAC stereo 64kbps | 140 | 100 | 100 | 90 |
| 1(SD) | 4:3 | 320x240 | AAC stereo 64kbps | 200 | 150 | 150 | 140 |
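The bitrates in this table can be turned into rough data volumes, which helps when estimating bandwidth or storage. The sketch below adds the H264 video and audio bitrates of each 16:9 representation. It converts kbps to megabytes per minute using decimal units (1 kbps = 1000 bits per second, 1 MB = 1,000,000 bytes). Real files also carry container overhead, which is ignored here.

```python
from typing import Dict, List, Tuple

# (audio kbps, H264 video kbps) per 16:9 representation, from the table above
H264_16_9: Dict[int, Tuple[int, int]] = {
    1: (64, 140), 2: (64, 270), 3: (96, 500), 4: (96, 1500),
    5: (128, 3300), 6: (128, 5500), 7: (128, 12000), 8: (128, 18000),
}

# Representation IDs per profile, from Representations generated for each profile
PROFILE_IDS: Dict[str, List[int]] = {
    "hd": [1, 2, 3, 4, 5],
    "full_hd": [1, 2, 3, 4, 5, 6],
    "4k": [1, 2, 3, 4, 5, 6, 7, 8],
}


def representation_kbps(rid: int) -> int:
    """Combined audio + video bitrate of one representation."""
    audio, video = H264_16_9[rid]
    return audio + video


def megabytes_per_minute(total_kbps: float) -> float:
    """kbps -> MB per minute (decimal units, container overhead ignored)."""
    return total_kbps * 60 / 8 / 1000


def ladder_mb_per_minute(profile: str) -> float:
    """Data for one minute of every representation in a profile combined."""
    return megabytes_per_minute(sum(representation_kbps(r) for r in PROFILE_IDS[profile]))


if __name__ == "__main__":
    print(round(megabytes_per_minute(representation_kbps(6)), 2))  # 1080p stream
    print(round(ladder_mb_per_minute("hd"), 3))                    # whole hd ladder
```

For example, representation 6 streams at 5500 + 128 = 5628 kbps, or about 42.2 MB per minute of playback.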

Manually creating representations and master files

If you have a special use case that prevents you from using the built-in streaming profiles to automatically generate the master playlist file and all the relevant representations, you can still have Cloudinary create the index and streaming files for each streaming transformation you define, and then create the master playlist file manually.

Step 1. Upload with eager transformations per representation

When you upload your video, provide eager transformations for each representation (resolution, quality, data rate) that you want to create. Also make sure to define the format for each transformation as .m3u8 (HLS) or .mpd (DASH) in a separate component of the chain.

When you define the format of your transformation as .m3u8 or .mpd, Cloudinary automatically generates the following derived files:

- A fragmented video streaming file (.ts or .mp4dv; for .ts, see note 1)
- An index file (.m3u8 or .mpd) of references to the fragments
- For DASH only: an audio stream file (.mp4da)

For example:
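As a sketch of eager transformations per representation, the helper below builds one transformation per (width, height, bit rate) triple. It puts the format in a separate component and joins the transformations with `|`. The specific parameters (w_, h_, c_limit, br_ with a k suffix) and the sizes taken from the representations table are illustrative choices, not the settings Cloudinary's profiles use internally. The `|`-separated string form of eager is an assumption.

```python
from typing import Iterable, Tuple


def representation_transformation(width: int, height: int, bitrate_kbps: int,
                                  fmt: str = "m3u8") -> str:
    """One representation: size and bit rate, then the format as its own
    component (illustrative parameter choice)."""
    return f"w_{width},h_{height},c_limit,br_{bitrate_kbps}k/{fmt}"


def eager_for_representations(specs: Iterable[Tuple[int, int, int]],
                              fmt: str = "m3u8") -> str:
    """Join per-representation transformations (assumed '|' separator)."""
    return "|".join(representation_transformation(w, h, br, fmt) for w, h, br in specs)


if __name__ == "__main__":
    print(eager_for_representations([(1280, 720, 3300), (640, 360, 500)]))
```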

1 If working with HLS v.3, a separate video streaming file (.ts) is derived for each fragment of each representation. To work with HLS v.3, you need a private CDN configuration and you need to add a special hlsv3 flag to your video transformations.

Step 2. Manually create the master playlist file

If you're not using streaming profiles, you need to create your master playlist file manually.

HLS Master Playlist (m3u8)

Each item in the m3u8 master index file points to a Cloudinary dynamic transformation URL, which represents the pair of auto-generated m3u8 index and ts video files created in the preceding step.

For example, the following mobile-targeted m3u8 master playlist file contains information on two versions of the dog.mp4 video, one higher resolution and the other lower resolution for poorer bandwidth conditions:
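A master playlist can also be generated programmatically. The sketch below writes the standard HLS tags: `#EXTM3U`, then one `#EXT-X-STREAM-INF` line with BANDWIDTH (in bits per second) and RESOLUTION before each variant URI. The variant URLs point to hypothetical transformation URLs for dog.mp4 under the placeholder cloud name `demo`. The bandwidth values are the video + audio bitrates of representations 5 and 3.

```python
from typing import Iterable, Tuple


def master_playlist(variants: Iterable[Tuple[int, int, int, str]]) -> str:
    """Build an HLS master playlist from (bandwidth_bps, width, height, uri)."""
    lines = ["#EXTM3U"]
    for bandwidth, width, height, uri in variants:
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}")
        lines.append(uri)
    return "\n".join(lines) + "\n"


# Hypothetical per-representation URLs; each resolves to an .m3u8 index + .ts files
BASE = "https://res.cloudinary.com/demo/video/upload"
playlist = master_playlist([
    (3_428_000, 1280, 720, f"{BASE}/w_1280,h_720,c_limit,br_3300k/dog.m3u8"),
    (596_000, 640, 360, f"{BASE}/w_640,h_360,c_limit,br_500k/dog.m3u8"),
])

if __name__ == "__main__":
    print(playlist, end="")
```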

MPEG-DASH Master Playlist

The .mpd master file is an XML document. For details on creating the .mpd master file, see: The Structure of an MPEG-DASH MPD.

Adding subtitles to HLS videos

In addition to adding subtitles as a layer, you can define subtitles for HLS videos to be referenced from the generated manifest file. This allows for multiple subtitle tracks to be included and will allow users to switch between subtitles from the video player they are using. To add subtitles to HLS videos, use the streaming profile parameter (sp) with an additional subtitles parameter that defines the vtt files to use for subtitles.

For example:

The subtitles parameter is defined using Cloudinary-specific URL syntax that allows you to specify the parameters of a JSON-like object in the URL. To convert a JSON object representing the subtitles to a Cloudinary URL syntax string, you can apply the following substitutions:

- ( marks the start of an object or an array of objects
- ) marks the end of an object
- ; separates values in an object
- _ separates a key from its value in an object

Taking our above example, the JSON equivalent would be:

Reference

Syntax

sp_sd:subtitles_((code_<language code>;file_<subtitles file>))

You can supply multiple subtitle tracks in the same subtitles parameter by appending additional sets of configuration, each surrounded by parentheses and separated by a semicolon. For example:

sp_sd:subtitles_((code_<language code>;file_<subtitles file>);(code_<language code2>;file_<subtitles file2>);(code_<language code3>;file_<subtitles file3>))
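The substitutions above can be applied mechanically. The sketch below converts a JSON list of subtitle tracks into the subtitles syntax. The track keys `code` and `file` follow the syntax above. Modeling the JSON as a list of objects with those keys is an assumption. Folder separators in the file's public ID are written as colons, as in `docs:narration.vtt`.

```python
import json
from typing import Dict, List


def subtitles_component(tracks: List[Dict[str, str]], profile: str = "auto") -> str:
    """Convert [{"code": ..., "file": ...}, ...] into sp_<profile>:subtitles_(...)."""
    if not tracks:
        raise ValueError("at least one subtitles track is required")
    objects = []
    for track in tracks:
        file_id = track["file"].replace("/", ":")  # folders use ':' in URLs
        # '(' opens an object, '_' separates key and value, ';' separates values
        objects.append(f"(code_{track['code']};file_{file_id})")
    # Outer parentheses wrap the array; ';' also separates the objects
    return f"sp_{profile}:subtitles_({';'.join(objects)})"


# Assumed JSON shape for a single US English track
tracks = json.loads('[{"code": "en-US", "file": "docs/narration.vtt"}]')

if __name__ == "__main__":
    print(subtitles_component(tracks))
```

This reproduces the sp_auto:subtitles_((code_en-US;file_docs:narration.vtt)) value used in Adding subtitles earlier on this page.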

Parameters

The available parameters you can use with subtitles are:

| Value | Type | Description |
|---|---|---|
| language code | string | The language code for the subtitles track, e.g., en-US for English (United States) subtitles. |
| subtitles file | string | The public ID and file extension for the subtitles file, e.g., outdoors.vtt. |

raw_transformation parameter.

In addition to the commonly used video transformation options described on this page, the following pages describe a variety of additional video transformation options:

- Apply a video transformation only if a specified condition is met.

- Use arithmetic expressions and variables to add additional sophistication and flexibility to your transformations.

- Convert videos to animated images.

- Stream or control the audio of audio or video files.

The Transformation URL API Reference details all transformation parameters available for both images and videos. Icons indicate which parameters are supported for each asset type.

The Video solution section provides a single, comprehensive resource where you can explore all the valuable features Cloudinary offers for your video use cases.
